First, some context. We are a web services company, and it’s very important to us that all of our websites stay online at all times. Uptime monitoring isn’t a new problem, but the existing services we tried had some, let’s say, limitations. For one thing, they usually wait for a website to be down for 15 minutes or more before sending an alert (we send an alert within 5 minutes of the site going down). They also don’t report the HTTP status code or give us any useful debugging information. When a server is returning 5xx errors, we want to see what the user sees; we want a screenshot, or at least an attempt at one. I’ve come to realize that services that try to be as cheap as possible simply can’t afford to provide this; web browsers are resource-intensive pieces of software, and no one wants to run one just to give us a screenshot.

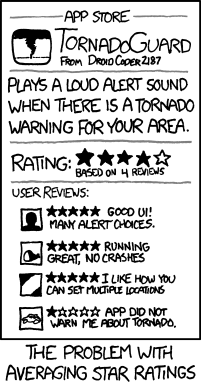

I think this XKCD explains the problem pretty well; credit to Randall Munroe:

An uptime monitor is only good if it actually lets you know about outages. Ours sure lets us know!

So how common are outages, really?

Well, it depends on the site. Some websites expect to have downtime, like solar.lowtechmagazine.com. For them, brief outages once in a while are expected and considered an acceptable tradeoff in service of other goals. At the time of writing, that website has 98.84% uptime, which is probably fine for their purposes.

Non-profit websites like archive.org have limited resources and are probably doing as well as anyone could with the budget they have. I’m sure they’d prefer not to go offline, but they need to balance that against other considerations. At the time of writing, they have 99.27% uptime.

But what about big sites from multi-billion-dollar companies? Surely those stay online all the time, right? Well, not really. At the time of writing, www.office.com, the website for Microsoft’s flagship office suite, has an abysmal 97.85% uptime. That’s right: their uptime is worse than that of a website running from an off-grid solar installation.

I want to stress this: we’ve been monitoring most of these sites for only about 30 days. In other words, not only do they have downtime, they have downtime regularly.
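To put those percentages in perspective, here’s a rough back-of-the-envelope conversion into actual downtime. It’s a minimal sketch that assumes each uptime figure covers a window of exactly 30 days; our monitoring period is only about 30 days, so treat the results as approximate. The function name `downtime_minutes` is just made up for this example.

```python
# Back-of-the-envelope sketch: convert an uptime percentage into minutes of
# downtime. Assumption: the measurement window is exactly 30 days; the real
# monitoring window is only "about" 30 days, so results are approximate.

WINDOW_DAYS = 30
MINUTES_PER_DAY = 24 * 60


def downtime_minutes(uptime_percent: float, window_days: float = WINDOW_DAYS) -> float:
    """Return the minutes of downtime implied by uptime_percent over window_days."""
    if not 0.0 <= uptime_percent <= 100.0:
        raise ValueError("uptime_percent must be between 0 and 100")
    window_minutes = window_days * MINUTES_PER_DAY
    # The fraction of the window spent offline, scaled to minutes.
    return (100.0 - uptime_percent) / 100.0 * window_minutes


if __name__ == "__main__":
    # Uptime figures quoted above, at the time of writing.
    observed = {
        "solar.lowtechmagazine.com": 98.84,
        "archive.org": 99.27,
        "www.office.com": 97.85,
    }
    for site, uptime in observed.items():
        minutes = downtime_minutes(uptime)
        print(f"{site}: {uptime}% uptime is about {minutes:.0f} min ({minutes / 60:.1f} h) offline")
```

By this estimate, www.office.com’s 97.85% works out to roughly 929 minutes, or about 15.5 hours, offline in a month. For comparison, 99.99% uptime over the same window allows only about 4.3 minutes of downtime.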

Wait, do they not care? Or do they not know?

I would hope that Microsoft has in-house monitoring that lets them know about problems, but if not, they’re welcome to sign up for ruup.site and we’d be happy to send them alerts!

It’s possible, if unlikely, that they’re waiting for users to complain before taking any action. Most downtime events are short, and maybe they’re hoping the issue will resolve itself before they need to do anything. I know that I wouldn’t consider that acceptable, but maybe Microsoft does.

Let’s assume that they know about at least the large outages that last over 30 minutes (of which we have logged 4 in the last 30 days). It’s hard to be charitable here; they have the resources to fix this, and yet they keep having downtime. I’ll let you draw your own conclusions.

Maybe it’s just Microsoft?

Microsoft is an unusually bad hosting provider, which is why I singled them out, but to a lesser extent this affects lots of websites. codeberg.org is a direct competitor to Microsoft’s GitHub, and presumably uses different hosting, but it also has frequent downtime. Cloudflare doesn’t save you, either; if your home page is a Bad Gateway error from Cloudflare, well, to me that’s being down.

On other sites, we’ve also seen a few live misconfiguration errors, including the lovely message “Additionally, a 500 Internal Server Error error was encountered while trying to use an ErrorDocument to handle the request.” It usually gets fixed within a few minutes, but our monitoring absolutely notices when that happens.

Is this just a fact of life, and does this happen to all websites?

Nope! There’s absolutely no reason that your websites need to go offline in 2026. You should be able to get 99.99% uptime without any problems, and if you have redundant failover, even 100% is achievable. This website, peaksite.dev, currently has 100% uptime. Big sites can do this too; our payment processor, stripe.com, also has 100% uptime.

Not only is this not an impossible goal, it’s not even a particularly hard one.

Your turn

So, what’s your website’s uptime? Do you know? Do you have monitoring? How much do you trust it to tell you when there’s a problem?

If you’re not sure, sign up for ruup.site; we’ll let you monitor up to 5 domains for free, and send you alerts when your website goes down. Obviously we’re biased, but we’ve also done a lot of testing and can say with confidence that our solution works.

And if you currently don’t have any kind of monitoring, get something as soon as possible, whether that’s from us or from someone else. If you want to minimize downtime, you need to find out about it the moment it happens!
